Christopher Markou

PhD Candidate, Lecturer, Faculty of Law | Associate Fellow, Leverhulme Centre for the Future of Intelligence, University of Cambridge

Education

The University of Cambridge, Faculty of Law, PhD

The University of Manchester, School of Law, LLB (1st)

The University of Toronto, St. Michael's College, MA (Honours)

The University of Toronto, St. Michael's College, BA (Honours)

Awards

Social Science and Humanities Research Council of Canada (SSHRC) Doctoral Fellowship, 2014-2018

Programme in European Private Law, Postgraduate Scholarship, 2016-2017

Wright Rogers Scholarship, The University of Cambridge, 2014-2018

Faculty of Law Bursary, The University of Cambridge, 2014-2018

Professional Associations & Memberships

The Law Society of Upper Canada

The Honourable Society of the Inner Temple

National Union of Journalists

Fields of research

Technology Law

Critical Technology Studies

Artificial Intelligence

Social Systems Theory

Complexity Theory

Philosophy of Technology

Science and Technology Studies

Evolutionary Economics

Legal Autonomy and Technological Change: A Systems Theoretical Analysis of Artificial Intelligence

Summary

The machines rise, mankind falls. It’s a science fiction trope nearly as old as machines themselves. The dystopian scenarios spun around this theme can make for compelling entertainment but it’s difficult to see them as serious threats. Nonetheless, artificially intelligent systems continue developing apace. Self-driving cars share our roads; smartphones manage our lives; facial recognition systems help catch bad guys and sophisticated algorithms dethrone our Jeopardy and Go champions. Developing these technologies could obviously benefit humanity. But, then—don’t most dystopian sci-fi stories start out this way?

My thesis addresses the question of whether, and if so, to what extent, the legal system can respond to risks and challenges posed by increasingly complex and legally problematic technological change. It draws on theories of legal and social evolution—particularly the Social Systems Theory of Niklas Luhmann—to explore the notion of a ‘lag’ in the ability of the legal system to respond to technological change and ‘shocks’. It evaluates the claim that the legal system’s lagged response to technological change is necessarily a deficit of its functioning, and hypothesises that it is instead an essential characteristic of the legal system’s autonomy, and of the processes through which the law ameliorates the potentially harmful or undesirable outcomes of science and technology on society and the individual.

It is clear that the legal system can respond to technological change, but only insofar as it can adjust its internal mode of operation, which takes time, and is constrained by the need to maintain legal autonomy. The visible signs of this adjustment take the form, among other things, of conceptual evolution in the face of new technological changes and risks. This process is observable in many historical precedents that are examined to demonstrate the adaptive capacity of the legal system in response to technological change. While it is true that legal systems are comparatively inflexible in response to new technologies, due to the rigidity of legal doctrine and reliance upon precedent and analogy in legal reasoning, an alternative outcome is possible: namely, the disintegration of the boundary between law and technology and the consequential loss of legal autonomy. A change of this kind would be signalled by what some have identified as the emergence of a technological ordering, or a ‘rule of technology’, that displaces and potentially subsumes the rule of law.

My thesis evaluates evidence for these two scenarios: the self-renewing capacity of the legal system, on the one hand, or its disintegration in response to technological change, on the other. These opposing scenarios will be evaluated using Artificial Intelligence (AI) as a case study to examine the co-evolutionary dynamics of law and technology and to assess the extent to which the legal system can shape, and be shaped by, technological change. It explores the nature of AI, its benefits and drawbacks, and argues that its proliferation may require a corresponding shift in how the law operates. As AI develops, centralised authorities such as government agencies, corporations, and indeed legal systems, may lose the ability to coordinate and regulate the activities of disparate persons through existing regulatory means. Consequently, there is a pressing need to understand how AI technology interfaces with existing legal frameworks and how the law must adapt in response to the creation of decentralised organisations, a question that has yet to be explored by current legal theory.

Supervisors

Professor Simon Deakin (Peterhouse)

Professor Ross Anderson (Trinity)

London Uber ban: regulators are finally catching up with technology

Sep 26, 2017 00:54 am UTC| Insights & Views Technology Law

In what could be a major blow to the gig economy, Transport for London (TfL) has refused to renew Uber’s licence to operate in the UK capital, its largest European market, on the grounds that its approach and conduct...

Why using AI to sentence criminals is a dangerous idea

May 16, 2017 14:03 pm UTC| Insights & Views Law Technology

Artificial intelligence is already helping determine your future, whether it’s your Netflix viewing preferences, your suitability for a mortgage or your compatibility with a prospective employer. But can we agree, at least...

We could soon face a robot crimewave ... the law needs to be ready

Apr 11, 2017 13:41 pm UTC| Technology Law

This is where we are at in 2017: sophisticated algorithms are both predicting and helping to solve crimes committed by humans; predicting the outcome of court cases and human rights trials; and helping to do the work done...

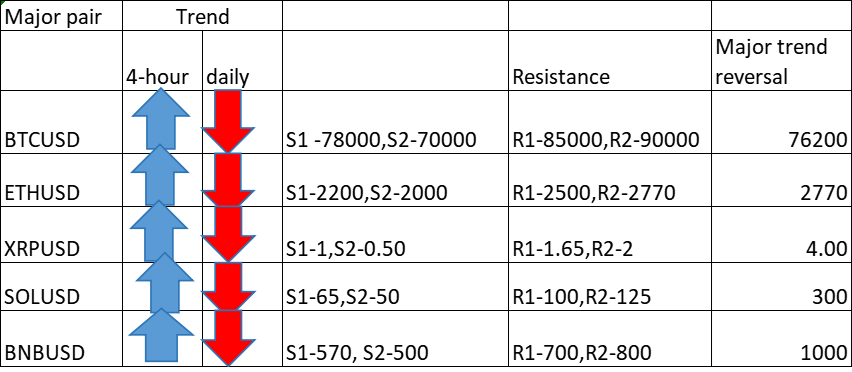

- Market Data