We ask experts for advice all the time. A company might ask an economist for advice on how to motivate its employees. A government might ask what the effect of a policy reform will be.

To give the advice, experts often would like to draw on the results of an experiment. But they don’t always have relevant experimental evidence.

Collecting expert predictions about research results could be a powerful new tool for improving science and the advice scientists give.

Better science

In the past few decades, academic rigour and transparency, particularly in the social sciences, have greatly improved.

Yet, as Australia’s Chief Scientist Alan Finkel recently argued, there is still much to be done to minimise “bad science”.

He recommends changes to the way research is measured and funded. Another increasingly common approach is to run randomised controlled trials and to pre-register studies, which helps prevent bias in which results get reported.

Expert predictions can be yet another tool for making research stronger, as my co-authors Stefano DellaVigna, Devin Pope and I argue in a new article published in Science.

Why predictions?

The way we interpret research results depends on what we already believe. For example, if we saw a study claiming to show that smoking was healthy, we would probably be pretty sceptical.

If a result surprises experts, that fact is itself informative. It could suggest that something was wrong with the study design.

Or, if the study was well designed and the finding replicated, we might conclude that the result fundamentally changes our understanding of how the world works.

Yet researchers rarely collect information that would let them compare their results with what the research community believed beforehand. This makes it hard to judge how novel or important a result is.
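One simple way to put a number on how surprising a result is, assuming several experts each forecast the effect size before the study, is to measure how far the result lies from the average forecast in units of the forecasters' disagreement. The sketch below is illustrative only: the z-score approach and the names `surprise_score` and `share_expecting_larger` are assumptions, not a method described in the article.

```python
from statistics import mean, stdev
from typing import Sequence


def surprise_score(forecasts: Sequence[float], result: float) -> float:
    """How many standard deviations the observed result lies from the
    mean expert forecast (a simple z-score; an illustrative choice)."""
    if len(forecasts) < 2:
        raise ValueError("need at least two forecasts to measure disagreement")
    spread = stdev(forecasts)  # sample standard deviation of the forecasts
    if spread == 0:
        raise ValueError("all forecasts are identical; z-score is undefined")
    return (result - mean(forecasts)) / spread


def share_expecting_larger(forecasts: Sequence[float], result: float) -> float:
    """Fraction of forecasters who predicted a larger effect than was observed."""
    return sum(f > result for f in forecasts) / len(forecasts)


# Hypothetical example: experts expected a positive effect, the study found none.
prior_forecasts = [0.2, 0.3, 0.25, 0.35, 0.3]
null_result = 0.0
score = surprise_score(prior_forecasts, null_result)          # strongly negative
share = share_expecting_larger(prior_forecasts, null_result)  # every forecaster
```

The measure is deliberately crude; with many forecasts, one could instead compare the result against the full distribution of forecasts.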

The academic publication process is also plagued by bias against publishing statistically insignificant, or “null”, results.

Collecting advance forecasts of research results could counter this bias by making null results more interesting: a null result where experts expected an effect may indicate a departure from accepted wisdom.

Changing minds

As well as directly improving the interpretation of research results, collecting advance forecasts can help us understand how people change their minds.

For example, my colleague Aidan Coville and I collected advance forecasts from policymakers to study how academic research results affected their beliefs. We found that, in general, they were more receptive to “good news” than to “bad news” and ignored the uncertainty in results.
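For context on what ignoring uncertainty departs from, a textbook benchmark is Bayesian updating with a normal prior and a normally distributed estimate: the posterior mean is a precision-weighted average of the prior and the new result, so a noisier result should move beliefs less. The sketch below implements that standard model under those assumptions; the function name `bayesian_update` is hypothetical, and this is not necessarily the model used in the study.

```python
from typing import Tuple


def bayesian_update(prior_mean: float, prior_sd: float,
                    result: float, result_se: float) -> Tuple[float, float]:
    """Normal prior + normally distributed estimate -> normal posterior.

    The posterior mean is a precision-weighted average of the prior mean and
    the new result, where precision = 1 / variance. Returns (mean, sd).
    """
    if prior_sd <= 0 or result_se <= 0:
        raise ValueError("standard deviations must be positive")
    prior_precision = 1.0 / prior_sd ** 2
    data_precision = 1.0 / result_se ** 2
    posterior_precision = prior_precision + data_precision
    posterior_mean = (prior_precision * prior_mean
                      + data_precision * result) / posterior_precision
    return posterior_mean, posterior_precision ** -0.5


# Hypothetical numbers: the same point estimate reported with different precision.
precise_mean, _ = bayesian_update(0.0, 1.0, result=1.0, result_se=0.5)
noisy_mean, _ = bayesian_update(0.0, 1.0, result=1.0, result_se=3.0)
# A Bayesian moves much further toward the precise estimate than the noisy one.
```

Someone who ignores uncertainty would, in effect, treat both results the same way, which is what makes this benchmark a useful yardstick.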

Forecasts can also tell us which potential studies could most improve policy decisions.

For example, suppose a research team has to pick one of ten interventions to study. For some of the interventions, we are pretty sure what a study would find, and a new study would be unlikely to change our minds. For others, we are less sure, but they are unlikely to be the best intervention.

If predictions were collected in advance, they could tell us which intervention to study to have the biggest policy impact.
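One standard way to formalise this choice is a value-of-information calculation (here, the expected value of perfect information). The sketch below assumes independent normal priors over each intervention's effect (built, say, from aggregated forecasts) and, for simplicity, that a study would reveal the true effect exactly; `value_of_studying` is a hypothetical name, not part of any existing tool. Interventions with a small prior spread, or with a mean far below the current best, score close to zero, matching the two cases in the example.

```python
from math import erf, exp, pi, sqrt
from typing import Sequence


def normal_pdf(z: float) -> float:
    return exp(-z * z / 2) / sqrt(2 * pi)


def normal_cdf(z: float) -> float:
    return 0.5 * (1 + erf(z / sqrt(2)))


def value_of_studying(means: Sequence[float], sds: Sequence[float], i: int) -> float:
    """Expected improvement in the effect of the intervention we end up choosing,
    if a study revealed intervention i's true effect exactly.

    Assumes at least two interventions, independent normal priors
    N(means[j], sds[j]**2), and that we always pick the highest expected effect.
    """
    best_other = max(m for j, m in enumerate(means) if j != i)
    mu, sigma = means[i], sds[i]
    choice_now = max(mu, best_other)  # value of deciding without the study
    if sigma == 0:
        return 0.0  # nothing left to learn about intervention i
    d = (mu - best_other) / sigma
    # E[max(X, c)] for X ~ N(mu, sigma^2) and the constant c = best_other
    choice_after = best_other + (mu - best_other) * normal_cdf(d) + sigma * normal_pdf(d)
    return choice_after - choice_now


# Hypothetical priors for three candidate interventions (toy numbers).
means = [0.5, 0.4, -1.0]  # expected effects
sds = [0.05, 0.3, 0.5]    # how unsure forecasters are about each effect
scores = [value_of_studying(means, sds, i) for i in range(len(means))]
best_to_study = max(range(len(means)), key=lambda i: scores[i])
```

With these toy priors the second intervention scores highest: its expected effect is slightly below the leader's, but forecasters are unsure enough about it that a study could overturn the ranking.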

Testing forecasts

In the long run, if expert forecasts can be shown to be fairly accurate, they could provide some support for policy decisions where rigorous studies can’t be conducted.

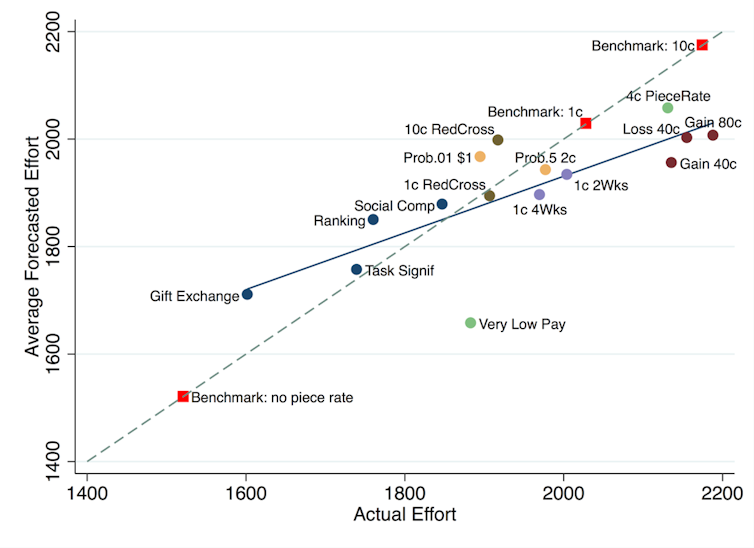

For example, Stefano DellaVigna and Devin Pope collected forecasts about how different incentives change the amount of effort people put into completing a task.

As you can see in the graph, the forecasts were not perfect (a dot on the dashed diagonal line would represent a perfect match between forecast and result). But there does appear to be some correlation between the aggregated forecasts and the results.

Reproduced with permission from DellaVigna and Pope.
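The graph makes two separate questions visible: do forecasts move with the results (correlation), and do they land near the diagonal (accuracy in level)? Below is a minimal sketch of both checks, assuming paired lists of aggregated forecasts and observed results; `pearson_r` and `mean_abs_error` are illustrative helper names, and the data are made up.

```python
from math import sqrt
from typing import Sequence


def pearson_r(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation: do forecasts move up and down with the results?"""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length sequences with at least 2 points")
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    if vx == 0 or vy == 0:
        raise ValueError("correlation is undefined when a series is constant")
    return cov / sqrt(vx * vy)


def mean_abs_error(forecasts: Sequence[float], results: Sequence[float]) -> float:
    """Average distance between forecast and result: are forecasts right in level?"""
    if len(forecasts) != len(results) or not forecasts:
        raise ValueError("need two non-empty, equal-length sequences")
    return sum(abs(f - y) for f, y in zip(forecasts, results)) / len(forecasts)


# Hypothetical data: forecasts that track the results but are all 10 units too high.
results = [1.0, 2.0, 3.0]
shifted_forecasts = [11.0, 12.0, 13.0]
r = pearson_r(shifted_forecasts, results)         # perfect correlation
mae = mean_abs_error(shifted_forecasts, results)  # yet far from the diagonal
```

The two measures can disagree sharply, which is why a dashed diagonal and a visible correlation are worth reading separately.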

A central place for forecasts

To make the most of forecasts of research results, they should be collected systematically.

Over time, this would help us assess how accurate individual forecasters are, teach us how best to aggregate forecasts, and tell us which types of results tend to be well predicted.
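As an illustration of the bookkeeping a central record would enable, the sketch below scores each forecaster by mean absolute error across studies and compares two simple ways of pooling forecasts, the mean and the median. The data layout (every forecaster predicts every study), the function names and the error metric are all assumptions made for illustration.

```python
from statistics import mean, median
from typing import Dict, List


def forecaster_errors(forecasts: Dict[str, List[float]],
                      results: List[float]) -> Dict[str, float]:
    """Mean absolute error of each forecaster across the same set of studies.

    forecasts maps a forecaster's name to one forecast per study, in the
    same order as results (a hypothetical data layout).
    """
    return {name: mean(abs(f - y) for f, y in zip(preds, results))
            for name, preds in forecasts.items()}


def aggregate_errors(forecasts: Dict[str, List[float]],
                     results: List[float]) -> Dict[str, float]:
    """Error of a pooled 'consensus' forecast under two simple pooling rules."""
    per_study = list(zip(*forecasts.values()))  # one tuple of forecasts per study
    errors = {}
    for label, pool in (("mean", mean), ("median", median)):
        consensus = [pool(study) for study in per_study]
        errors[label] = mean(abs(c - y) for c, y in zip(consensus, results))
    return errors


# Toy data: forecaster C is a large outlier.
forecasts = {"A": [1.0, 1.0], "B": [2.0, 2.0], "C": [10.0, 10.0]}
results = [2.0, 2.0]
individual = forecaster_errors(forecasts, results)
pooled = aggregate_errors(forecasts, results)
```

In this toy case the outlier drags the mean consensus away from the results while the median is unaffected; which pooling rule works best on real forecasts is exactly what systematic collection could reveal.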

We built a platform that researchers can use to collect forecasts about their experiments from researchers, policymakers, practitioners, and other important audiences. The beta version of the website is now available.

While we are focusing first on our own discipline, economics, we think such a tool should be broadly useful. We would encourage researchers in any academic field to consider collecting predictions of research results.

The Social Science Prediction Platform, https://socialscienceprediction.org/.

There are many potential uses for predictions of research results beyond those described here. Other academics are also exploring this area, for example through the Replication Markets and repliCATS projects, which are part of a large research initiative on replication.

The many possible uses of research forecasts give us confidence that a more rigorous and systematic treatment of prior beliefs can greatly improve the interpretation of research results and, ultimately, the way we do science.
